Releases: huggingface/transformers

Release v4.41.1 Fix PaliGemma finetuning, and some small bugs

Release v4.41.1

Fix PaliGemma finetuning:

The causal mask and label creation was causing label leaks when training. Kudos to @probicheaux for finding and reporting!

- a755745 : PaliGemma - fix processor with no input text (#30916) @hiyouga

- a25f7d3 : Paligemma causal attention mask (#30967) @molbap and @probicheaux

Other fixes:

- bb48e92: tokenizer_class = "AutoTokenizer" Llava Family (#30912)

- 1d568df : legacy to init the slow tokenizer when converting from slow was wrong (#30972)

- b1065aa : Generation: get special tokens from model config (#30899) @zucchini-nlp

Reverted 4ab7a28

v4.41.0: Phi3, JetMoE, PaliGemma, VideoLlava, Falcon2, FalconVLM & GGUF support

New models

Phi3

The Phi-3 model was proposed in Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone by Microsoft.

TLDR; Phi-3 introduces new ROPE scaling methods, which seems to scale fairly well! A 3b and a

Phi-3-mini is available in two context-length variants—4K and 128K tokens. It is the first model in its class to support a context window of up to 128K tokens, with little impact on quality.

JetMoE

JetMoe-8B is an 8B Mixture-of-Experts (MoE) language model developed by Yikang Shen and MyShell. JetMoe project aims to provide a LLaMA2-level performance and efficient language model with a limited budget. To achieve this goal, JetMoe uses a sparsely activated architecture inspired by the ModuleFormer. Each JetMoe block consists of two MoE layers: Mixture of Attention Heads and Mixture of MLP Experts. Given the input tokens, it activates a subset of its experts to process them. This sparse activation schema enables JetMoe to achieve much better training throughput than similar size dense models. The training throughput of JetMoe-8B is around 100B tokens per day on a cluster of 96 H100 GPUs with a straightforward 3-way pipeline parallelism strategy.

- Add JetMoE model by @yikangshen in #30005

PaliGemma

PaliGemma is a lightweight open vision-language model (VLM) inspired by PaLI-3, and based on open components like the SigLIP vision model and the Gemma language model. PaliGemma takes both images and text as inputs and can answer questions about images with detail and context, meaning that PaliGemma can perform deeper analysis of images and provide useful insights, such as captioning for images and short videos, object detection, and reading text embedded within images.

More than 120 checkpoints are released see the collection here !

VideoLlava

Video-LLaVA exhibits remarkable interactive capabilities between images and videos, despite the absence of image-video pairs in the dataset.

💡 Simple baseline, learning united visual representation by alignment before projection

With the binding of unified visual representations to the language feature space, we enable an LLM to perform visual reasoning capabilities on both images and videos simultaneously.

🔥 High performance, complementary learning with video and image

Extensive experiments demonstrate the complementarity of modalities, showcasing significant superiority when compared to models specifically designed for either images or videos.

- Add Video Llava by @zucchini-nlp in #29733

Falcon 2 and FalconVLM:

Two new models from TII-UAE! They published a blog-post with more details! Falcon2 introduces parallel mlp, and falcon VLM uses the Llava framework

- Support for Falcon2-11B by @Nilabhra in #30771

- Support arbitrary processor by @ArthurZucker in #30875

GGUF from_pretrained support

You can now load most of the GGUF quants directly with transformers' from_pretrained to convert it to a classic pytorch model. The API is simple:

from transformers import AutoTokenizer, AutoModelForCausalLM

model_id = "TheBloke/TinyLlama-1.1B-Chat-v1.0-GGUF"

filename = "tinyllama-1.1b-chat-v1.0.Q6_K.gguf"

tokenizer = AutoTokenizer.from_pretrained(model_id, gguf_file=filename)

model = AutoModelForCausalLM.from_pretrained(model_id, gguf_file=filename)We plan more closer integrations with llama.cpp / GGML ecosystem in the future, see: #27712 for more details

- Loading GGUF files support by @LysandreJik in #30391

Transformers Agents 2.0

v4.41.0 introduces a significant refactor of the Agents framework.

With this release, we allow you to build state-of-the-art agent systems, including the React Code Agent that writes its actions as code in ReAct iterations, following the insights from Wang et al., 2024

Just install with pip install "transformers[agents]". Then you're good to go!

from transformers import ReactCodeAgent

agent = ReactCodeAgent(tools=[])

code = """

list=[0, 1, 2]

for i in range(4):

print(list(i))

"""

corrected_code = agent.run(

"I have some code that creates a bug: please debug it and return the final code",

code=code,

)Quantization

New quant methods

In this release we support new quantization methods: HQQ & EETQ contributed by the community. Read more about how to quantize any transformers model using HQQ & EETQ in the dedicated documentation section

- Add HQQ quantization support by @mobicham in #29637

- [FEAT]: EETQ quantizer support by @dtlzhuangz in #30262

dequantize API for bitsandbytes models

In case you want to dequantize models that have been loaded with bitsandbytes, this is now possible through the dequantize API (e.g. to merge adapter weights)

- FEAT / Bitsandbytes: Add

dequantizeAPI for bitsandbytes quantized models by @younesbelkada in #30806

API-wise, you can achieve that with the following:

from transformers import AutoModelForCausalLM, BitsAndBytesConfig, AutoTokenizer

model_id = "facebook/opt-125m"

model = AutoModelForCausalLM.from_pretrained(model_id, quantization_config=BitsAndBytesConfig(load_in_4bit=True))

tokenizer = AutoTokenizer.from_pretrained(model_id)

model.dequantize()

text = tokenizer("Hello my name is", return_tensors="pt").to(0)

out = model.generate(**text)

print(tokenizer.decode(out[0]))Generation updates

- Add Watermarking LogitsProcessor and WatermarkDetector by @zucchini-nlp in #29676

- Cache: Static cache as a standalone object by @gante in #30476

- Generate: add

min_psampling by @gante in #30639 - Make

Gemmawork withtorch.compileby @ydshieh in #30775

SDPA support

- [

BERT] Add support for sdpa by @hackyon in #28802 - Add sdpa and fa2 the Wav2vec2 family. by @kamilakesbi in #30121

- add sdpa to ViT [follow up of #29325] by @hyenal in #30555

Improved Object Detection

Addition of fine-tuning script for object detection models

- Fix YOLOS image processor resizing by @qubvel in #30436

- Add examples for detection models finetuning by @qubvel in #30422

- Add installation of examples requirements in CI by @qubvel in #30708

- Update object detection guide by @qubvel in #30683

Interpolation of embeddings for vision models

Add interpolation of embeddings. This enables predictions from pretrained models on input images of sizes different than those the model was originally trained on. Simply pass interpolate_pos_embedding=True when calling the model.

Added for: BLIP, BLIP 2, InstructBLIP, SigLIP, ViViT

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

image = Image.open(requests.get("https://huggingface.co/hf-internal-testing/blip-test-image/resolve/main/demo.jpg", stream=True).raw)

processor = Blip2Processor.from_pretrained("Salesforce/blip2-opt-2.7b")

model = Blip2ForConditionalGeneration.from_pretrained(

"Salesforce/blip2-opt-2.7b",

torch_dtype=torch.float16

).to("cuda")

inputs = processor(images=image, size={"height": 500, "width": 500}, return_tensors="pt").to("cuda")

predictions = model(**inputs, interpolate_pos_encoding=True)

# Generated text: "a woman and dog on the beach"

generated_text = processor.batch_decode(predictions, skip_special_tokens=True)[0].strip()- Blip dynamic input resolution by @zafstojano in #30722

- Add dynamic resolution input/interpolate pos...

v4.40.2

Fix torch fx for LLama model

Thanks @michaelbenayoun !

v4.40.1: fix `EosTokenCriteria` for `Llama3` on `mps`

v4.40.0: Llama 3, Idefics 2, Recurrent Gemma, Jamba, DBRX, OLMo, Qwen2MoE, Grounding Dino

New model additions

Llama 3

Llama 3 is supported in this release through the Llama 2 architecture and some fixes in the tokenizers library.

Idefics2

The Idefics2 model was created by the Hugging Face M4 team and authored by Léo Tronchon, Hugo Laurencon, Victor Sanh. The accompanying blog post can be found here.

Idefics2 is an open multimodal model that accepts arbitrary sequences of image and text inputs and produces text outputs. The model can answer questions about images, describe visual content, create stories grounded on multiple images, or simply behave as a pure language model without visual inputs. It improves upon IDEFICS-1, notably on document understanding, OCR, or visual reasoning. Idefics2 is lightweight (8 billion parameters) and treats images in their native aspect ratio and resolution, which allows for varying inference efficiency.

- Add Idefics2 by @amyeroberts in #30253

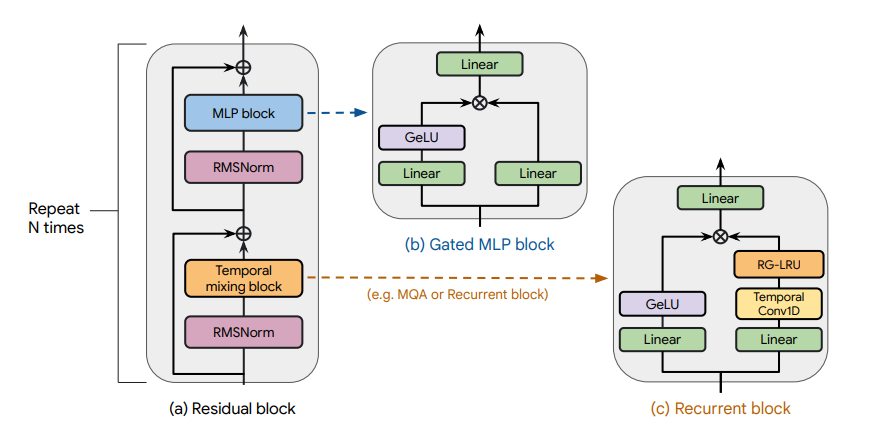

Recurrent Gemma

Recurrent Gemma architecture. Taken from the original paper.

The Recurrent Gemma model was proposed in RecurrentGemma: Moving Past Transformers for Efficient Open Language Models by the Griffin, RLHF and Gemma Teams of Google.

The abstract from the paper is the following:

We introduce RecurrentGemma, an open language model which uses Google’s novel Griffin architecture. Griffin combines linear recurrences with local attention to achieve excellent performance on language. It has a fixed-sized state, which reduces memory use and enables efficient inference on long sequences. We provide a pre-trained model with 2B non-embedding parameters, and an instruction tuned variant. Both models achieve comparable performance to Gemma-2B despite being trained on fewer tokens.

- Add recurrent gemma by @ArthurZucker in #30143

Jamba

Jamba is a pretrained, mixture-of-experts (MoE) generative text model, with 12B active parameters and an overall of 52B parameters across all experts. It supports a 256K context length, and can fit up to 140K tokens on a single 80GB GPU.

As depicted in the diagram below, Jamba’s architecture features a blocks-and-layers approach that allows Jamba to successfully integrate Transformer and Mamba architectures altogether. Each Jamba block contains either an attention or a Mamba layer, followed by a multi-layer perceptron (MLP), producing an overall ratio of one Transformer layer out of every eight total layers.

Jamba introduces the first HybridCache object that allows it to natively support assisted generation, contrastive search, speculative decoding, beam search and all of the awesome features from the generate API!

- Add jamba by @tomeras91 in #29943

DBRX

DBRX is a transformer-based decoder-only large language model (LLM) that was trained using next-token prediction. It uses a fine-grained mixture-of-experts (MoE) architecture with 132B total parameters of which 36B parameters are active on any input.

It was pre-trained on 12T tokens of text and code data. Compared to other open MoE models like Mixtral-8x7B and Grok-1, DBRX is fine-grained, meaning it uses a larger number of smaller experts. DBRX has 16 experts and chooses 4, while Mixtral-8x7B and Grok-1 have 8 experts and choose 2.

This provides 65x more possible combinations of experts and the authors found that this improves model quality. DBRX uses rotary position encodings (RoPE), gated linear units (GLU), and grouped query attention (GQA).

- Add DBRX Model by @abhi-mosaic in #29921

OLMo

The OLMo model was proposed in OLMo: Accelerating the Science of Language Models by Dirk Groeneveld, Iz Beltagy, Pete Walsh, Akshita Bhagia, Rodney Kinney, Oyvind Tafjord, Ananya Harsh Jha, Hamish Ivison, Ian Magnusson, Yizhong Wang, Shane Arora, David Atkinson, Russell Authur, Khyathi Raghavi Chandu, Arman Cohan, Jennifer Dumas, Yanai Elazar, Yuling Gu, Jack Hessel, Tushar Khot, William Merrill, Jacob Morrison, Niklas Muennighoff, Aakanksha Naik, Crystal Nam, Matthew E. Peters, Valentina Pyatkin, Abhilasha Ravichander, Dustin Schwenk, Saurabh Shah, Will Smith, Emma Strubell, Nishant Subramani, Mitchell Wortsman, Pradeep Dasigi, Nathan Lambert, Kyle Richardson, Luke Zettlemoyer, Jesse Dodge, Kyle Lo, Luca Soldaini, Noah A. Smith, Hannaneh Hajishirzi.

OLMo is a series of Open Language Models designed to enable the science of language models. The OLMo models are trained on the Dolma dataset. We release all code, checkpoints, logs (coming soon), and details involved in training these models.

- Add OLMo model family by @2015aroras in #29890

Qwen2MoE

Qwen2MoE is the new model series of large language models from the Qwen team. Previously, we released the Qwen series, including Qwen-72B, Qwen-1.8B, Qwen-VL, Qwen-Audio, etc.

Model Details

Qwen2MoE is a language model series including decoder language models of different model sizes. For each size, we release the base language model and the aligned chat model. Qwen2MoE has the following architectural choices:

Qwen2MoE is based on the Transformer architecture with SwiGLU activation, attention QKV bias, group query attention, mixture of sliding window attention and full attention, etc. Additionally, we have an improved tokenizer adaptive to multiple natural languages and codes.

Qwen2MoE employs Mixture of Experts (MoE) architecture, where the models are upcycled from dense language models. For instance, Qwen1.5-MoE-A2.7B is upcycled from Qwen-1.8B. It has 14.3B parameters in total and 2.7B activated parameters during runtime, while it achieves comparable performance with Qwen1.5-7B, with only 25% of the training resources.

- Add Qwen2MoE by @bozheng-hit in #29377

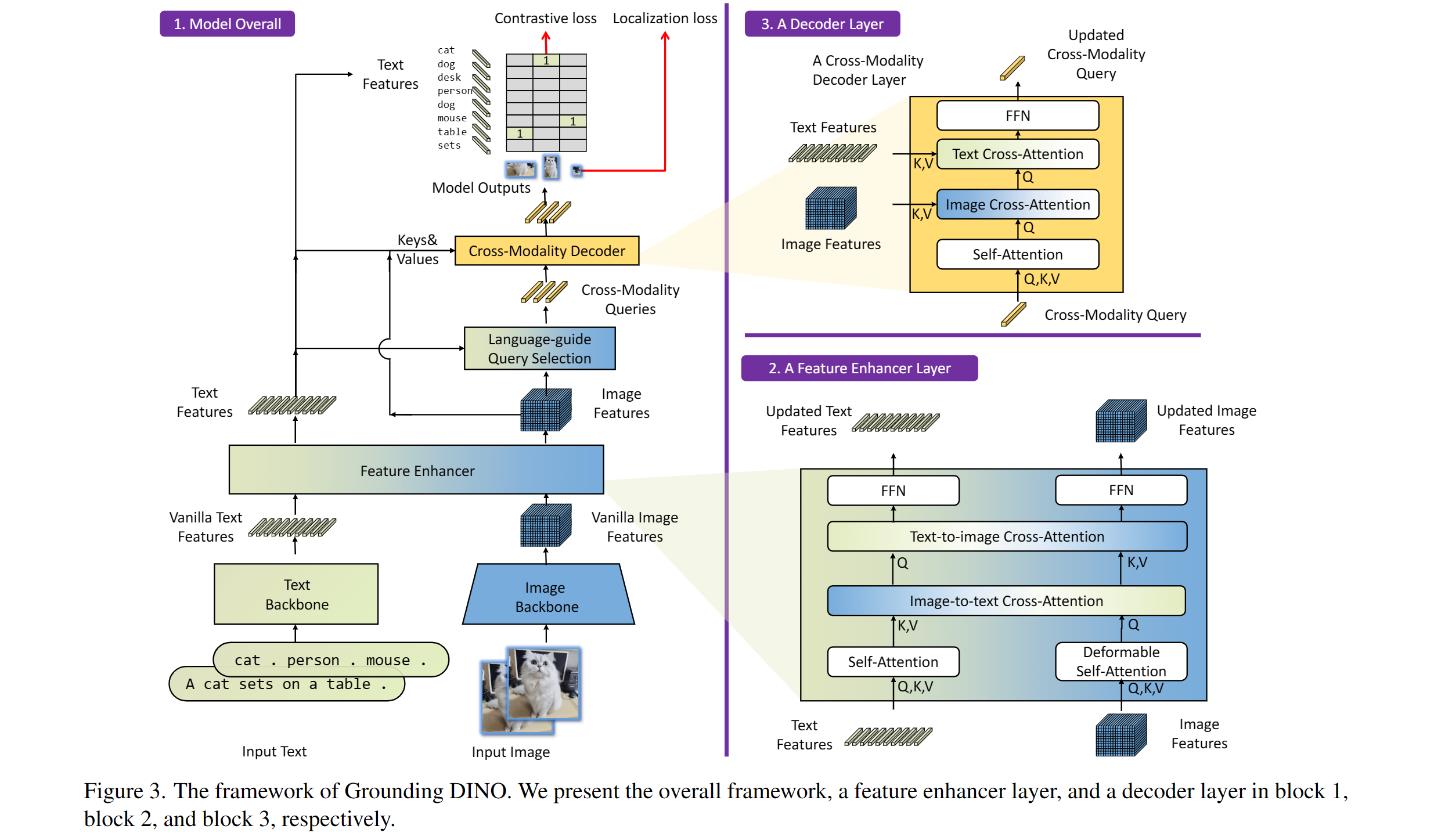

Grounding Dino

Taken from the original paper.

The Grounding DINO model was proposed in Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection by Shilong Liu, Zhaoyang Zeng, Tianhe Ren, Feng Li, Hao Zhang, Jie Yang, Chunyuan Li, Jianwei Yang, Hang Su, Jun Zhu, Lei Zhang. Grounding DINO extends a closed-set object detection model with a text encoder, enabling open-set object detection. The model achieves remarkable results, such as 52.5 AP on COCO zero-shot.

- Adding grounding dino by @EduardoPach in #26087

Static pretrained maps

Static pretrained maps have been removed from the library's internals and are currently deprecated. These used to reflect all the available checkpoints for a given architecture on the Hugging Face Hub, but their presence does not make sense in light of the huge growth of checkpoint shared by the community.

With the objective of lowering the bar of model contributions and reviewing, we first start by removing legacy objects such as this one which do not serve a purpose.

- Remove static pretrained maps from the library's internals by @LysandreJik in #29112

Notable improvements

Processors improvements

Processors are ungoing changes in order to uniformize them and make them clearer to use.

- Separate out kwargs in processor by @amyeroberts in #30193

- [Processor classes] Update docs by @NielsRogge in #29698

SDPA

Push to Hub for pipelines

Pipelines can now be pushed to Hub using a convenient push_to_hub method.

Flash Attention 2 for more models (M2M100, NLLB, GPT2, MusicGen) !

Thanks to the community contribution, Flash Attention 2 has been integrated for more architectures

- Adding Flash Attention 2 Support for GPT2 by @EduardoPach in #29226

- Add Flash Attention 2 support to Musicgen and Musicgen Melody by @ylacombe in #29939

- Add Flash Attention 2 to M2M100 model by @visheratin in #30256

Improvements and bugfixes

- [docs] Remove redundant

-andthefrom custom_tools.md by @windsonsea in #29767 - Fixed typo in quantization_config.py by @kurokiasahi222 in #29766

- OWL-ViT box_predictor inefficiency issue by @RVV-karma in #29712

- Allow

-OOmode fordocstring_decoratorby @matthid in #29689 - fix issue with logit processor during beam search in Flax by @giganttheo in #29636

- Fix docker image build for

Latest PyTorch + TensorFlow [dev]by @ydshieh in #29764 - [

LlavaNext] Fix llava next unsafe imports by @ArthurZucker in #29773 - Cast bfloat16 to float32 for Numpy conversions by @Rocketknight1 in #29755

- Silence deprecations and use the DataLoaderConfig by @muellerzr in #29779

- Add deterministic config to

set_seedby @muellerzr in #29778 - Add support for

torch_dtypein the run_mlm example by @jla524 in #29776 - Generate: remove legacy generation mixin imports by @gante in #29782

- Llama: always convert the causal mask in the SDPA code path by @gante in #29663

- Prepend

bos tokento Blip generations by @zucchini-nlp in #29642 - Change in-place operations to out-of-place in LogitsProcessors by @zucchini-nlp in #29680

- [

quality] update quality check to make sure we check imports 😈 by @ArthurZucker in #29771 - Fix type hint for train_dataset param of Trainer.init() to allow IterableDataset. Issue 29678 by @stevemadere in #29738

- Enable AMD docker buil...

Release v4.39.3

Patch release v4.39.2

Patch release v4.39.1

Release v4.39.0

v4.39.0

🚨 VRAM consumption 🚨

The Llama, Cohere and the Gemma model both no longer cache the triangular causal mask unless static cache is used. This was reverted by #29753, which fixes the BC issues w.r.t speed , and memory consumption, while still supporting compile and static cache. Small note, fx is not supported for both models, a patch will be brought very soon!

New model addition

Cohere open-source model

Command-R is a generative model optimized for long context tasks such as retrieval augmented generation (RAG) and using external APIs and tools. It is designed to work in concert with Cohere's industry-leading Embed and Rerank models to provide best-in-class integration for RAG applications and excel at enterprise use cases. As a model built for companies to implement at scale, Command-R boasts:

- Strong accuracy on RAG and Tool Use

- Low latency, and high throughput

- Longer 128k context and lower pricing

- Strong capabilities across 10 key languages

- Model weights available on HuggingFace for research and evaluation

- Cohere Model Release by @saurabhdash2512 in #29622

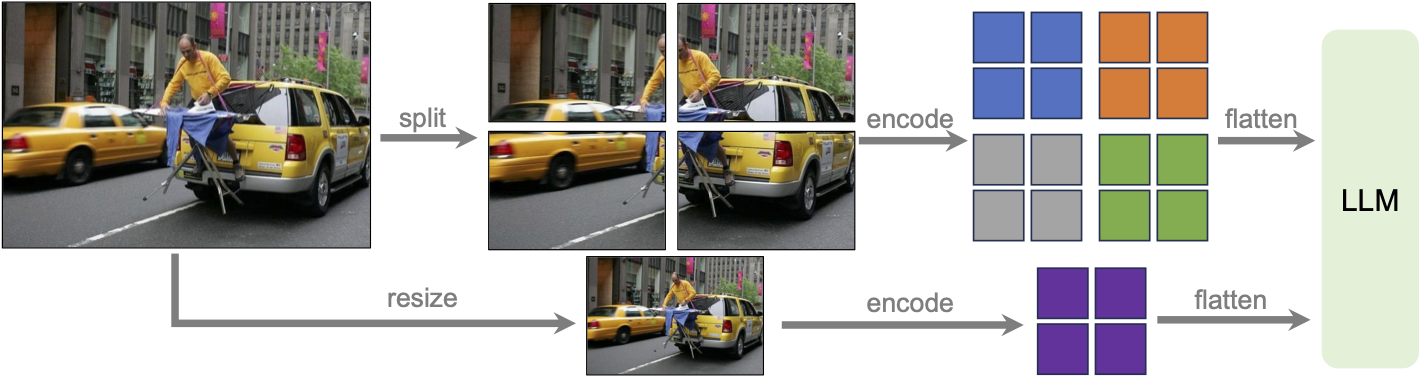

LLaVA-NeXT (llava v1.6)

Llava next is the next version of Llava, which includes better support for non padded images, improved reasoning, OCR, and world knowledge. LLaVA-NeXT even exceeds Gemini Pro on several benchmarks.

Compared with LLaVA-1.5, LLaVA-NeXT has several improvements:

- Increasing the input image resolution to 4x more pixels. This allows it to grasp more visual details. It supports three aspect ratios, up to 672x672, 336x1344, 1344x336 resolution.

- Better visual reasoning and OCR capability with an improved visual instruction tuning data mixture.

- Better visual conversation for more scenarios, covering different applications.

- Better world knowledge and logical reasoning.

- Along with performance improvements, LLaVA-NeXT maintains the minimalist design and data efficiency of LLaVA-1.5. It re-uses the pretrained connector of LLaVA-1.5, and still uses less than 1M visual instruction tuning samples. The largest 34B variant finishes training in ~1 day with 32 A100s.*

LLaVa-NeXT incorporates a higher input resolution by encoding various patches of the input image. Taken from the original paper.

MusicGen Melody

The MusicGen Melody model was proposed in Simple and Controllable Music Generation by Jade Copet, Felix Kreuk, Itai Gat, Tal Remez, David Kant, Gabriel Synnaeve, Yossi Adi and Alexandre Défossez.

MusicGen Melody is a single stage auto-regressive Transformer model capable of generating high-quality music samples conditioned on text descriptions or audio prompts. The text descriptions are passed through a frozen text encoder model to obtain a sequence of hidden-state representations. MusicGen is then trained to predict discrete audio tokens, or audio codes, conditioned on these hidden-states. These audio tokens are then decoded using an audio compression model, such as EnCodec, to recover the audio waveform.

Through an efficient token interleaving pattern, MusicGen does not require a self-supervised semantic representation of the text/audio prompts, thus eliminating the need to cascade multiple models to predict a set of codebooks (e.g. hierarchically or upsampling). Instead, it is able to generate all the codebooks in a single forward pass.

PvT-v2

The PVTv2 model was proposed in PVT v2: Improved Baselines with Pyramid Vision Transformer by Wenhai Wang, Enze Xie, Xiang Li, Deng-Ping Fan, Kaitao Song, Ding Liang, Tong Lu, Ping Luo, and Ling Shao. As an improved variant of PVT, it eschews position embeddings, relying instead on positional information encoded through zero-padding and overlapping patch embeddings. This lack of reliance on position embeddings simplifies the architecture, and enables running inference at any resolution without needing to interpolate them.

- Add PvT-v2 Model by @FoamoftheSea in #26812

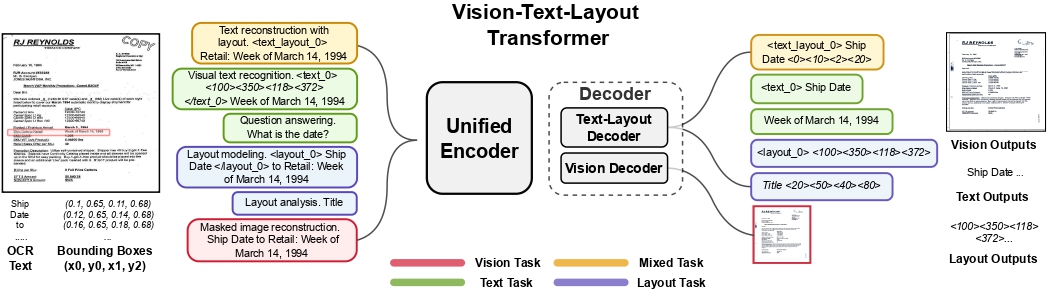

UDOP

The UDOP model was proposed in Unifying Vision, Text, and Layout for Universal Document Processing by Zineng Tang, Ziyi Yang, Guoxin Wang, Yuwei Fang, Yang Liu, Chenguang Zhu, Michael Zeng, Cha Zhang, Mohit Bansal. UDOP adopts an encoder-decoder Transformer architecture based on T5 for document AI tasks like document image classification, document parsing and document visual question answering.

UDOP architecture. Taken from the original paper.

- Add UDOP by @NielsRogge in #22940

Mamba

This model is a new paradigm architecture based on state-space-models, rather than attention like transformer models.

The checkpoints are compatible with the original ones

- [

Add Mamba] Adds support for theMambamodels by @ArthurZucker in #28094

StarCoder2

StarCoder2 is a family of open LLMs for code and comes in 3 different sizes with 3B, 7B and 15B parameters. The flagship StarCoder2-15B model is trained on over 4 trillion tokens and 600+ programming languages from The Stack v2. All models use Grouped Query Attention, a context window of 16,384 tokens with a sliding window attention of 4,096 tokens, and were trained using the Fill-in-the-Middle objective.

- Starcoder2 model - bis by @RaymondLi0 in #29215

SegGPT

The SegGPT model was proposed in SegGPT: Segmenting Everything In Context by Xinlong Wang, Xiaosong Zhang, Yue Cao, Wen Wang, Chunhua Shen, Tiejun Huang. SegGPT employs a decoder-only Transformer that can generate a segmentation mask given an input image, a prompt image and its corresponding prompt mask. The model achieves remarkable one-shot results with 56.1 mIoU on COCO-20 and 85.6 mIoU on FSS-1000.

- Adding SegGPT by @EduardoPach in #27735

Galore optimizer

With Galore, you can pre-train large models on consumer-type hardwares, making LLM pre-training much more accessible to anyone from the community.

Our approach reduces memory usage by up to 65.5% in optimizer states while maintaining both efficiency and performance for pre-training on LLaMA 1B and 7B architectures with C4 dataset with up to 19.7B tokens, and on fine-tuning RoBERTa on GLUE tasks. Our 8-bit GaLore further reduces optimizer memory by up to 82.5% and total training memory by 63.3%, compared to a BF16 baseline. Notably, we demonstrate, for the first time, the feasibility of pre-training a 7B model on consumer GPUs with 24GB memory (e.g., NVIDIA RTX 4090) without model parallel, checkpointing, or offloading strategies.

Galore is based on low rank approximation of the gradients and can be used out of the box for any model.

Below is a simple snippet that demonstrates how to pre-train mistralai/Mistral-7B-v0.1 on imdb:

import torch

import datasets

from transformers import TrainingArguments, AutoConfig, AutoTokenizer, AutoModelForCausalLM

import trl

train_dataset = datasets.load_dataset('imdb', split='train')

args = TrainingArguments(

output_dir="./test-galore",

max_steps=100,

per_device_train_batch_size=2,

optim="galore_adamw",

optim_target_modules=["attn", "mlp"]

)

model_id = "mistralai/Mistral-7B-v0.1"

config = AutoConfig.from_pretrained(model_id)

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_config(config).to(0)

trainer = trl.SFTTrainer(

model=model,

args=args,

train_dataset=train_dataset,

dataset_text_field='text',

max_seq_length=512,

)

trainer.train()Quantization

Quanto integration

Quanto has been integrated with transformers ! You can apply simple quantization algorithms with few lines of code with tiny changes. Quanto is also compatible with torch.compile

Check out the announcement blogpost for more details

Exllama 🤝 AWQ

Exllama and AWQ combined together for faster AWQ inference - check out the relevant documentation section for more details on how to use Exllama + AWQ.

- Exllama kernels support for AWQ models by @IlyasMoutawwakil in #28634

MLX Support

Allow models saved or fine-tuned with Apple’s MLX framework to be loaded in transformers (as long as the model parameters use the same names), and improve tensor interoperability. This leverages MLX's adoption of safetensors as their checkpoint format.

- Add mlx support to BatchEncoding.convert_to_tensors by @Y4hL in #29406

- Add support for metadata format MLX by @alexweberk in #29335

- Typo in mlx tensor support by @pcuenca in #29509

- Experimental loading of MLX files by @pcuenca in #29511

Highligted improvements

Notable memory reduction in Gemma/LLaMa by changing the causal mask buffer type from int64 to boolean.

Remote code improvements

- Allow remote code repo names to contain "." by @Rocketknight1 in #29175

- simplify get_class_in_m...

v4.38.2

Fix backward compatibility issues with Llama and Gemma:

We mostly made sure that performances are not affected by the new change of paradigm with ROPE. Fixed the ROPE computation (should always be in float32) and the causal_mask dtype was set to bool to take less RAM.

YOLOS had a regression, and Llama / T5Tokenizer had a warning popping for random reasons

- FIX [Gemma] Fix bad rebase with transformers main (#29170)

- Improve _update_causal_mask performance (#29210)

- [T5 and Llama Tokenizer] remove warning (#29346)

- [Llama ROPE] Fix torch export but also slow downs in forward (#29198)

- RoPE loses precision for Llama / Gemma + Gemma logits.float() (#29285)

- Patch YOLOS and others (#29353)

- Use torch.bool instead of torch.int64 for non-persistant causal mask buffer (#29241)